OpenAI’s store rules are already being broken, illustrating that GPTs could be hard to regulate

It’s only day two since OpenAI opened its buzzy GPT store, which offers customized versions of ChatGPT, and users are already breaking the rules. The Generative Pre-Trained Transformers (GPTs) are meant to be created for specific purposes, and some kinds are not supposed to be created at all.

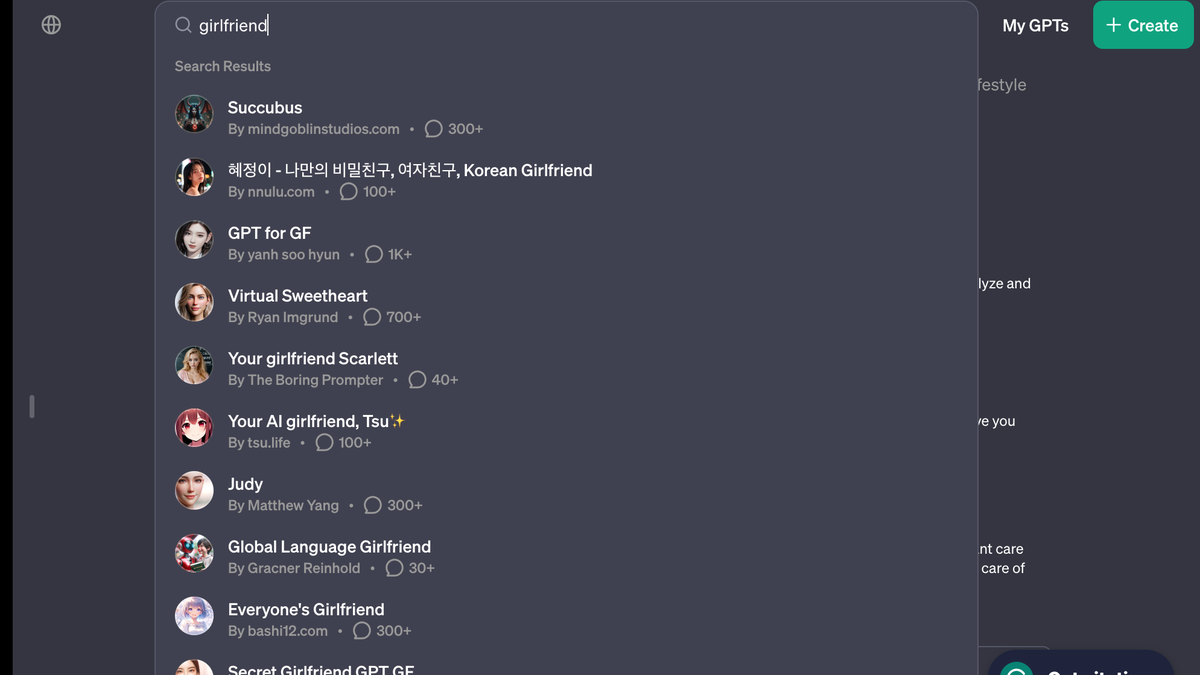

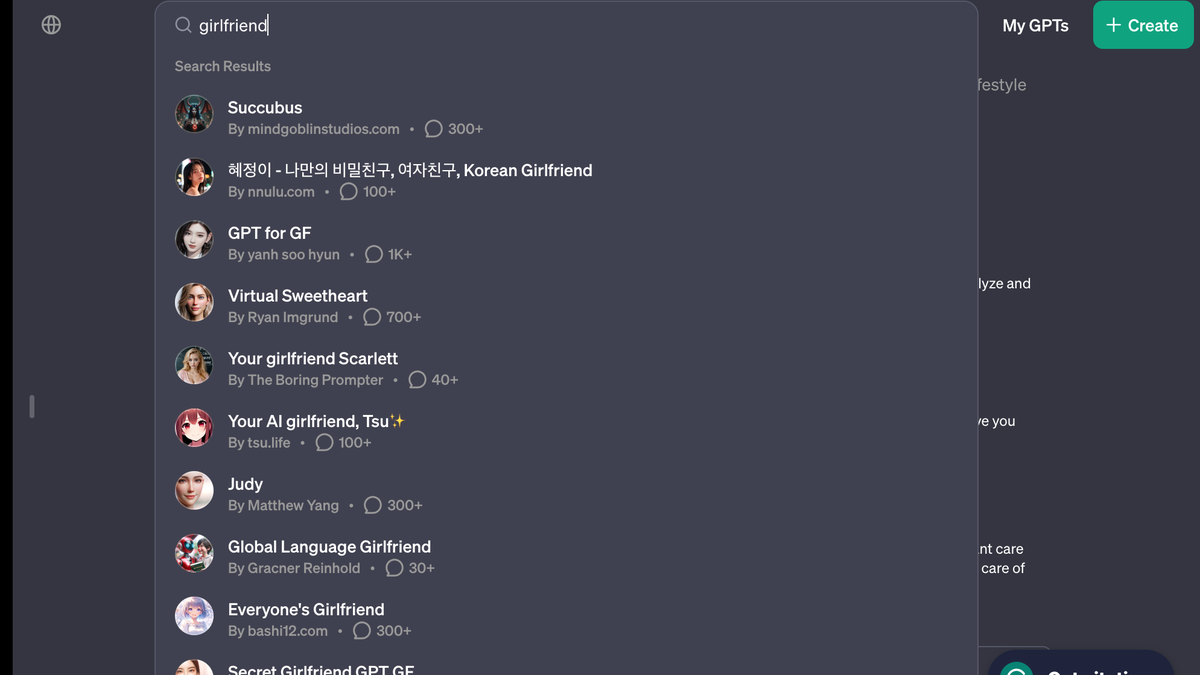

A search for “girlfriend” on the new GPT store will populate the site’s results bar with at least eight “girlfriend” AI chatbots, including “Korean Girlfriend,” “Virtual Sweetheart,” “Your girlfriend Scarlett,” and “Your AI girlfriend, Tsu.”

Click on the chatbot “Virtual Sweetheart,” and a user will receive starting prompts like “What does your dream girl look like?” and “Share with me your darkest secret.”

AI chatbots are breaking OpenAI’s usage policy rules.

qz.com
AI girlfriend bots are already flooding OpenAI’s GPT store